One HAproxy to rule them all

Published on Monday, 01 January, 2024

Intro

Deploying services in containers is great, but connecting to them is less so. You forward a port to your network, and then your connection string is some odd monster with a weird port number that doesn't make any sense.

You could solve that in multiple ways, such as giving each service its own IP address and exposing it on the correct port, but that would just mean you have countless addresses on one machine. You would also have to allow most containers to bind to privileged ports, since you want to run that web app on port 443.

The alternative is to have a load balancer in front of your services. For this task I'm using HAproxy.

It will allow us to listen on a single IP and port and direct traffic to the desired service based on parameters like the hostname and path in the request. While typically you would use it to balance http traffic, we'll go a bit further and set it up for tcp as well, allowing us to use it for things like mail and ssh.

The boring bits

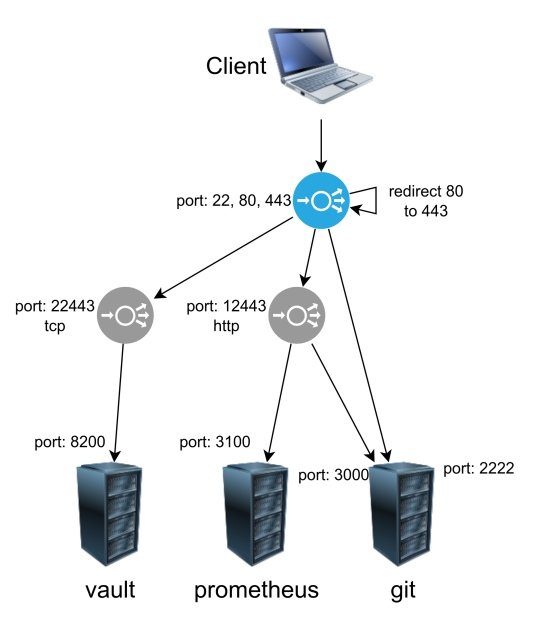

Let's illustrate our goal:

The haproxy service listens on ports 22, 80 and 443. Since we don't want any unencrypted traffic outside our network, port 80 will be redirected to 443.

Anything on port 22 will be sent to the appropriate backend. In this case, let's imagine we have a git server listening on ports 22 and 443.

The only thing remaining is traffic on port 443. Handling this traffic is layered: first, we determine if the traffic is http or tls, then we send it to the appropriate backend for each protocol.

What is listening on those backends? Well, it's haproxy again, listening on 12443 for https and on 22433 for tcp. Now that we are at the right backend, we can finally send traffic to our desired application.

You might wonder: "Why do I even need all this complexity? All my traffic is https anyway." Short answer: "Trust me." Long answer: it depends. If your use case is really simple, maybe you don't. But in this case, you have to look at the two services.

We have vault and prometheus. vault is a security-sensitive service that uses end-to-end encryption, meaning that the server itself serves traffic over https. prometheus, on the other hand, listens on plain http and doesn't have tls enabled.

You could handle this with only one layer, by reencrypting traffic: traffic comes to haproxy, gets decrypted (tls termination), and is then sent to vault, encrypted again with vault's certificate.

The issue here is that you are needlessly decrypting the traffic on haproxy, which is both expensive to compute and less secure than just passing the traffic through to vault.

There is no issue for prometheus, since traffic between haproxy and prometheus isn't encrypted in any case.

This more complex setup lets you have the three standard configurations for tls on load balancers:

- edge - encrypted from client to LB, plaintext from LB to service
- reencrypt - encrypted from client to LB with one certificate, reencrypted from LB to service with a second certificate
- passthrough - encrypted from client to service

Now that we have the basics down, we can start.

Installation

As is tradition, we are starting with podman. You can use any other compatible container runtime. On the Rocky Linux server that I'm using, installing podman is as simple as running:

# dnf install podman

But at this point it should be equally simple on any distro.

With podman installed, create the folder structure for persistent files. For me, everything for containers is in the /containers folder.

# mkdir -p /containers/haproxy/certs

# mkdir -p /containers/haproxy/haproxy.cfg

We will be using a neat feature of haproxy: it can read its configuration from a folder, allowing us to split the config into multiple files.
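When haproxy is pointed at a folder with -f, it loads the regular, non-hidden files ending in .cfg in lexical order, so numeric prefixes are a common way to keep the load order predictable. The helper below is a small sketch that prints that order for a given folder; the function name and the example file names are my own and only there for illustration.

```shell
# Assumed default; point CFG_DIR elsewhere to try this on a scratch folder.
CFG_DIR="${CFG_DIR:-/containers/haproxy/haproxy.cfg}"

# Print the files haproxy would pick up from a directory passed to -f:
# regular, non-hidden files ending in .cfg, in C-locale lexical order.
list_cfg_files() {
    (
        LC_ALL=C
        export LC_ALL
        for f in "$1"/*.cfg; do
            if [ -f "$f" ]; then
                basename "$f"
            fi
        done
    )
}

# Example (file names such as 00-global.cfg are hypothetical):
#   list_cfg_files "$CFG_DIR"
# Syntax check of the whole folder, wherever a haproxy binary is available:
#   haproxy -c -f "$CFG_DIR"
```

Files without the .cfg extension are skipped, which makes it easy to park a disabled snippet by renaming it.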

Configuration

We need to configure several things for this to work:

- main haproxy config
- main frontend and backend
  - ssh frontend and backend
  - tls frontend and backend
  - http frontend and backend
- service frontend and backends

Certificates and DNS

Now, before we begin with all the config, there are two things you will need: certificates for all the services behind haproxy, and some way to configure dns. If you don't have any certificates, you can check the vault guide and create some. And there is also a guide for dns.

I would recommend a wildcard certificate for this exercise. Something like *.example.tld, and putting everything into subdomains, so let's say:

- git.example.tld
- vault.example.tld
- prometheus.example.tld

And your DNS should point all those addresses to the same IP address, where haproxy is listening.
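A quick way to confirm the DNS side is to resolve every name and compare the result against the address haproxy listens on. The sketch below assumes getent is available (it is on glibc systems such as Rocky Linux); the function names are my own, and 192.0.2.10 is only a placeholder address.

```shell
# Resolve a name to its first address via the system resolver.
# getent is an assumption here (glibc systems such as Rocky Linux).
resolve() {
    getent hosts "$1" | awk '{ print $1; exit }'
}

# Usage: check_dns EXPECTED_IP HOST...
# Returns non-zero if any host is unresolved or points somewhere else.
check_dns() {
    expected=$1
    shift
    status=0
    for host in "$@"; do
        actual=$(resolve "$host")
        if [ "$actual" = "$expected" ]; then
            echo "ok   $host -> $actual"
        else
            echo "FAIL $host -> ${actual:-unresolved} (expected $expected)"
            status=1
        fi
    done
    return "$status"
}

# Example; 192.0.2.10 stands in for the address haproxy listens on:
#   check_dns 192.0.2.10 git.example.tld vault.example.tld prometheus.example.tld
```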